Week 11 [Mon, Oct 28th] - Topics


Guidance for the item(s) below:

While architecture is not of high importance to a small project such as the tP, it is good to know a little bit about it in case you are thrown into a larger project in future.

Introduction

Guidance for the item(s) below:

First, let us learn about multi-level design, a precursor to learning about architecture.

Can explain multi-level design

In a smaller system, the design of the entire system can be shown in one place.

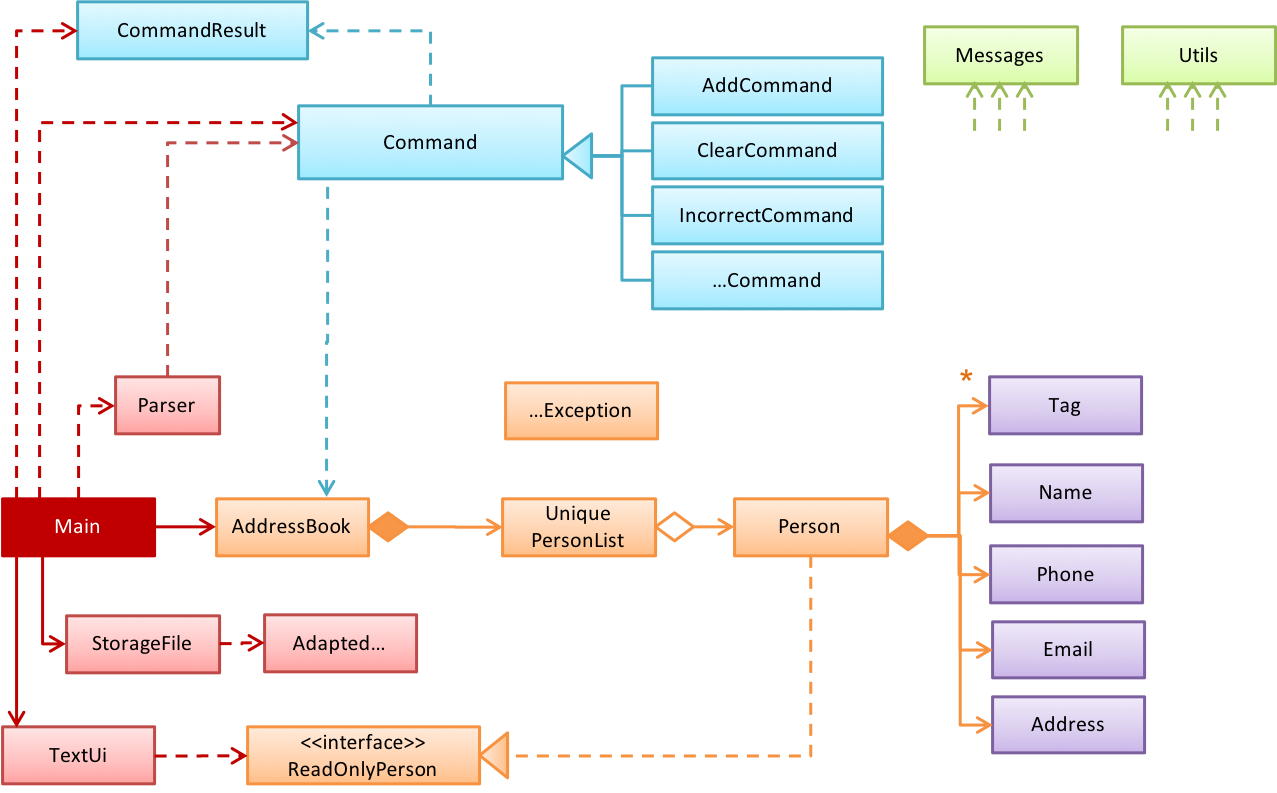

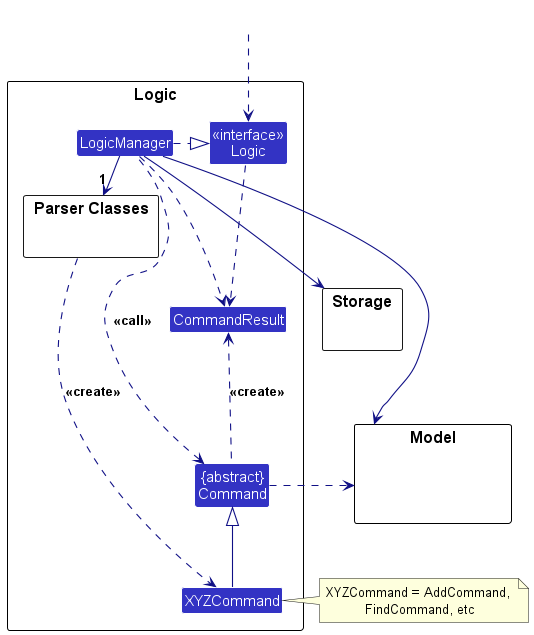

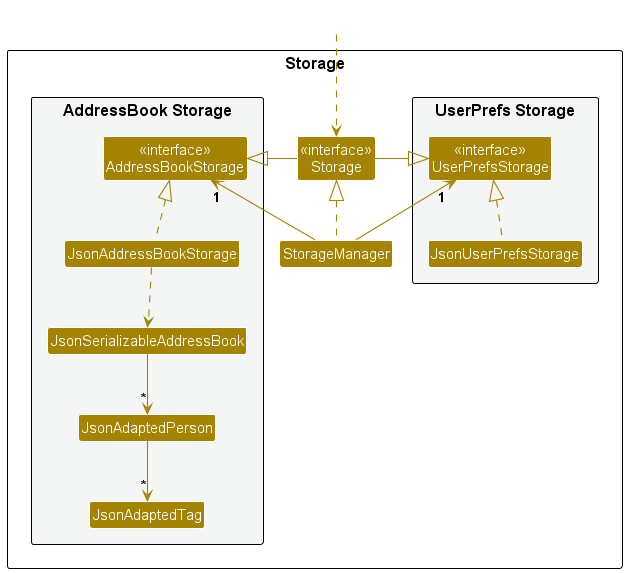

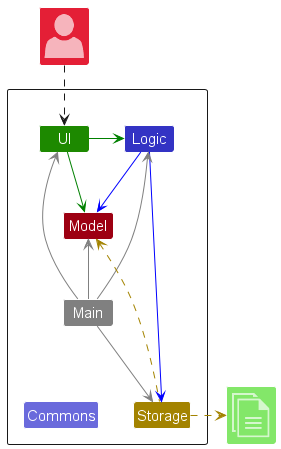

This class diagram of se-edu/addressbook-level2 depicts the design of the entire software.

The design of bigger systems needs to be done/shown at multiple levels.

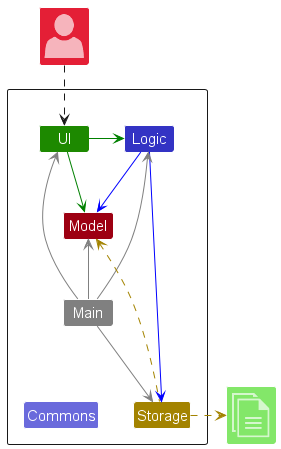

This architecture diagram of se-edu/addressbook-level3 depicts the high-level design of the software.

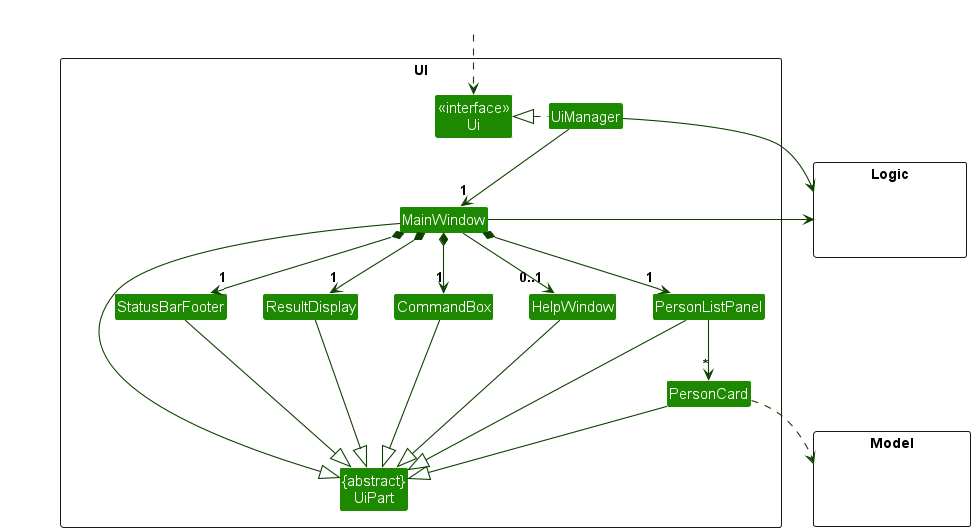

Here are examples of lower level designs of some components of the same software:

Guidance for the item(s) below:

Now that we know about multi-level design, let us learn about architecture, which is a special case of multi-level design. We also cover architecture diagrams here.

Can explain Software Architecture

The software architecture of a program or computing system is the structure or structures of the system, which comprise software elements, the externally visible properties of those elements, and the relationships among them. Architecture is concerned with the public side of interfaces; private details of elements—details having to do solely with internal implementation—are not architectural. -- Software Architecture in Practice (2nd edition), Bass, Clements, and Kazman

The software architecture shows the overall organization of the system and can be viewed as a very high-level design. It usually consists of a set of interacting components that fit together to achieve the required functionality. It should be a simple and technically viable structure that is well-understood and agreed-upon by everyone in the development team, and it forms the basis for the implementation.

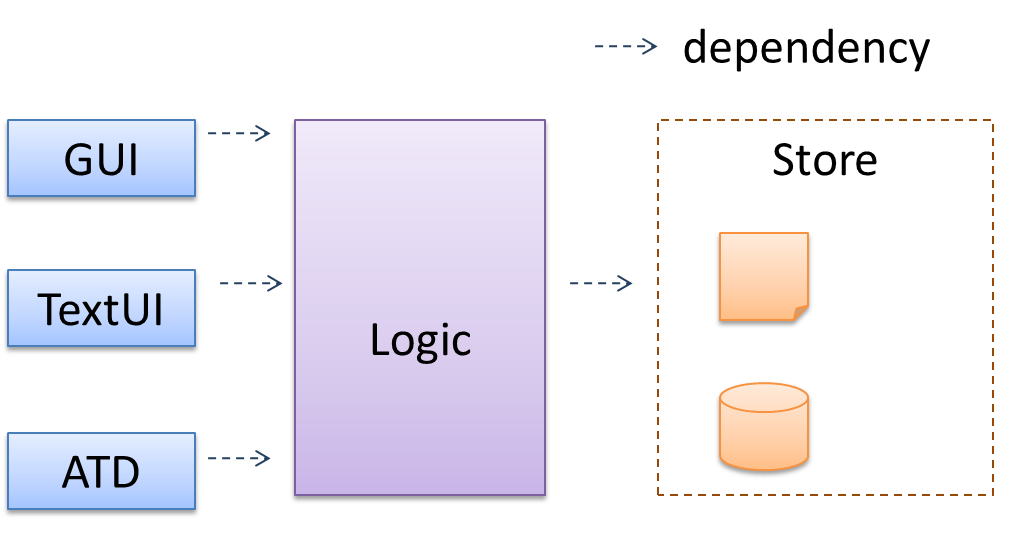

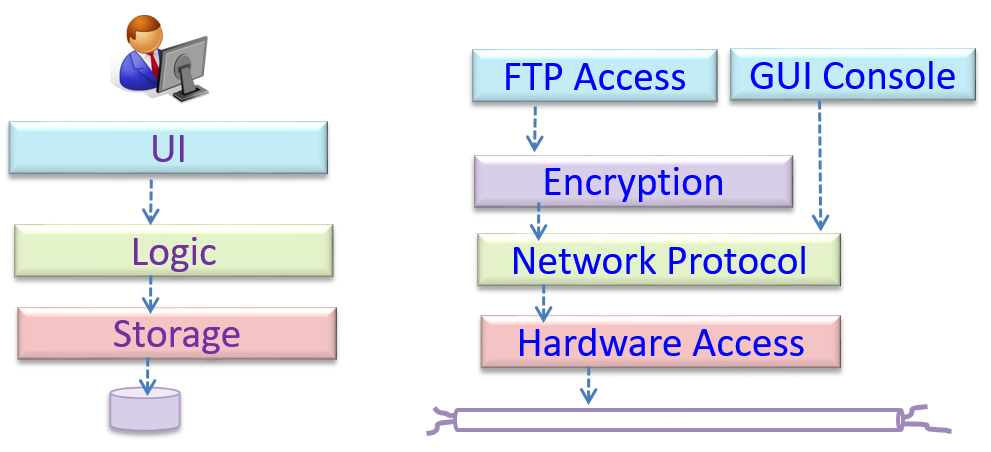

A possible architecture for a Minesweeper game:


Main components:

- GUI: Graphical user interface

- TextUi: Textual user interface

- ATD: An automated test driver used for testing the game logic

- Logic: Computation and logic of the game

- Store: Storage and retrieval of game data (high scores etc.)

The architecture is typically designed by the software architect, who provides the technical vision of the system and makes high-level (i.e. architecture-level) technical decisions about the project.

Can interpret an architecture diagram

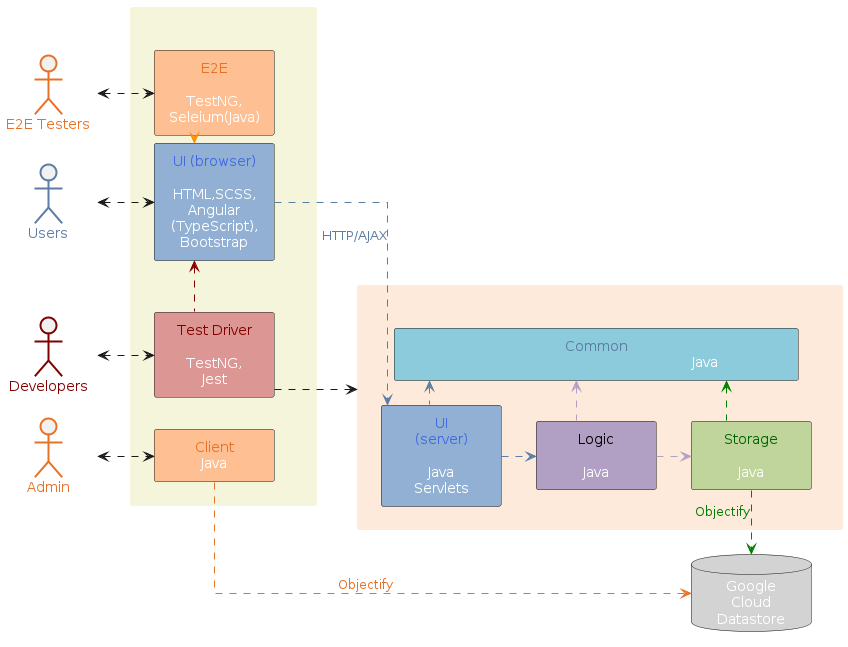

Architecture diagrams are free-form diagrams. There is no universally adopted standard notation for architecture diagrams. Any symbols that reasonably describe the architecture may be used.

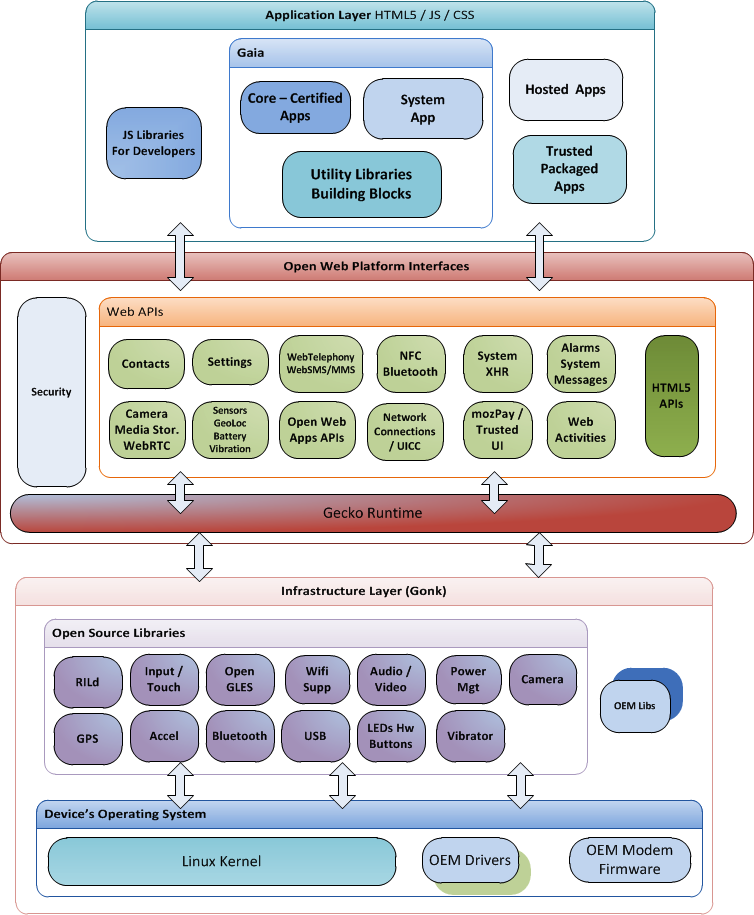

Some example architecture diagrams:

Guidance for the item(s) below:

The next topic is like 'design patterns at architecture level'. In fact, the MVC pattern you saw earlier comes close to this category too.

Architectural Styles

Can explain architectural styles

Software architectures follow various high-level styles (aka architectural patterns), just like how building architectures follow various architecture styles.

n-tier style, client-server style, event-driven style, transaction processing style, service-oriented style, pipes-and-filters style, message-driven style, broker style, ...

Can identify n-tier architectural style

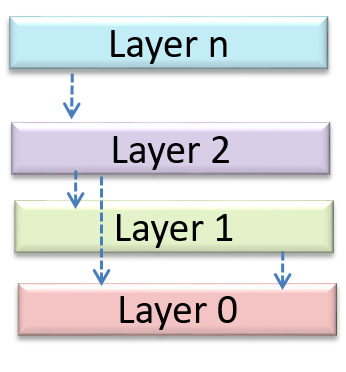

In the n-tier style, higher layers make use of services provided by lower layers. Lower layers are independent of higher layers. Other names: multi-layered, layered.

Operating systems and network communication software often use n-tier style.
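To make the 'higher layers use lower layers, but not the other way around' idea concrete, here is a minimal sketch of a three-layer design. The class and method names (StorageLayer, LogicLayer, UiLayer, and so on) are hypothetical and chosen only for illustration; a real n-tier system would be far more elaborate.

```python
# Hypothetical three-layer sketch; all class and method names are assumptions.

class StorageLayer:
    """Lowest layer: has no knowledge of the layers above it."""

    def __init__(self):
        self._names = {1: "Alice"}  # hard-coded sample data

    def load_name(self, person_id):
        return self._names[person_id]


class LogicLayer:
    """Middle layer: uses the storage layer below it, unaware of the UI."""

    def __init__(self, storage):
        self._storage = storage

    def greeting_for(self, person_id):
        return "Hello, " + self._storage.load_name(person_id)


class UiLayer:
    """Top layer: talks only to the logic layer directly below it."""

    def __init__(self, logic):
        self._logic = logic

    def render(self, person_id):
        return "[UI] " + self._logic.greeting_for(person_id)


ui = UiLayer(LogicLayer(StorageLayer()))
print(ui.render(1))  # prints: [UI] Hello, Alice
```

Note that each class holds a reference only to the layer below it, so a lower layer can be reused or tested without any of the layers above it.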

Can identify the client-server architectural style

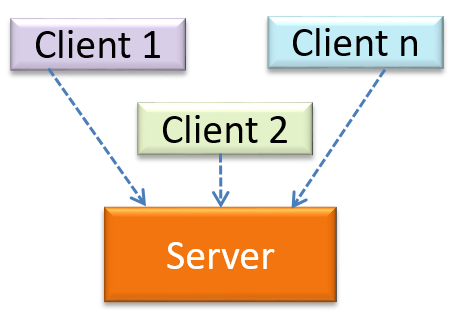

The client-server style has at least one component playing the role of a server and at least one client component accessing the services of the server. This is an architectural style used often in distributed applications.

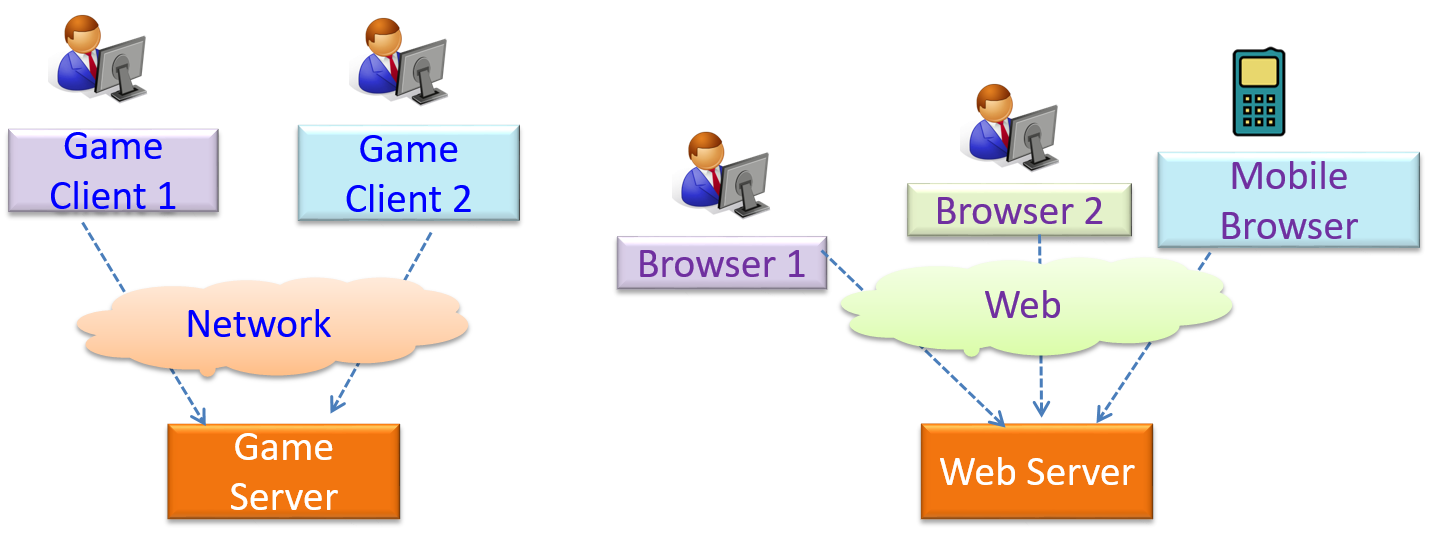

The online game and the web application below use the client-server style.

Guidance for the item(s) below:

As we approach the last part of the tP, we'll be spending more time learning about software testing. This week, we start off with an overview of different types of software testing.

Unit Testing

Can explain unit testing

Unit testing: testing individual units (methods, classes, subsystems, ...) to ensure each piece works correctly.

In OOP code, it is common to write one or more unit tests for each public method of a class.

Here are the code skeletons for a Foo class containing two methods and a FooTest class that contains unit tests for those two methods.

    class Foo {
        String read() {
            // ...
        }

        void write(String input) {
            // ...
        }
    }

    class FooTest {

        @Test
        void read() {
            // a unit test for Foo#read() method
        }

        @Test
        void write_emptyInput_exceptionThrown() {
            // a unit test for Foo#write(String) method
        }

        @Test
        void write_normalInput_writtenCorrectly() {
            // another unit test for Foo#write(String) method
        }
    }

    import unittest

    class Foo:
        def read(self):
            pass  # ...

        def write(self, input):
            pass  # ...

    class FooTest(unittest.TestCase):

        def test_read(self):
            pass  # a unit test for read() method

        def test_write_emptyInput_ignored(self):
            pass  # a unit test for write(string) method

        def test_write_normalInput_writtenCorrectly(self):
            pass  # another unit test for write(string) method

Resources:

- Learning from Apple’s #gotofail Security Bug - How unit testing (and other good coding practices) could have prevented a major security bug.

Can use stubs to isolate an SUT from its dependencies

A proper unit test requires the unit to be tested in isolation so that bugs in its dependencies cannot influence the test, i.e. bugs outside of the unit should not affect the unit tests.

If a Logic class depends on a Storage class, unit testing the Logic class requires isolating the Logic class from the Storage class.

Stubs can isolate the SUT (Software Under Test) from its dependencies.

Stub: A stub has the same interface as the component it replaces, but its implementation is so simple that it is unlikely to have any bugs. It mimics the responses of the component, but only for a limited set of predetermined inputs. That is, it does not know how to respond to any other inputs. Typically, these mimicked responses are hard-coded in the stub rather than computed or retrieved from elsewhere, e.g. from a database.

Consider the code below:

    class Logic {
        Storage s;

        Logic(Storage s) {
            this.s = s;
        }

        String getName(int index) {
            return "Name: " + s.getName(index);
        }
    }

    interface Storage {
        String getName(int index);
    }

    class DatabaseStorage implements Storage {

        @Override
        public String getName(int index) {
            return readValueFromDatabase(index);
        }

        private String readValueFromDatabase(int index) {
            // retrieve name from the database
        }
    }

Normally, you would use the Logic class as follows (note how the Logic object depends on a DatabaseStorage object to perform the getName() operation):

    Logic logic = new Logic(new DatabaseStorage());
    String name = logic.getName(23);

You can test it like this:

    @Test
    void getName() {
        Logic logic = new Logic(new DatabaseStorage());
        assertEquals("Name: John", logic.getName(5));
    }

However, the logic object being tested makes use of a DatabaseStorage object, which means a bug in the DatabaseStorage class can affect the test. Therefore, this test is not testing Logic in isolation from its dependencies and hence it is not a pure unit test.

Here is a stub class you can use in place of DatabaseStorage:

    class StorageStub implements Storage {

        @Override
        public String getName(int index) {
            if (index == 5) {
                return "Adam";
            } else {
                throw new UnsupportedOperationException();
            }
        }
    }

Note how the StorageStub has the same interface as DatabaseStorage, but is so simple that it is unlikely to contain bugs, and is pre-configured to respond with a hard-coded response, presumably, the correct response DatabaseStorage is expected to return for the given test input.

Here is how you can use the stub to write a unit test. This test is not affected by any bugs in the DatabaseStorage class and hence is a pure unit test.

    @Test
    void getName() {
        Logic logic = new Logic(new StorageStub());
        assertEquals("Name: Adam", logic.getName(5));
    }

In addition to Stubs, there are other types of replacements you can use during testing, e.g. Mocks, Fakes, Dummies, Spies.
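As a rough illustration of how a mock differs from a stub, the sketch below re-creates the Logic example in Python and replaces the storage with a Mock object from Python's standard unittest.mock module. Like a stub, the mock returns a canned response; unlike a stub, it also records how it was called, so the test can verify the interaction itself. The Python Logic class and its get_name method are assumptions made for this sketch, not part of the Java code above.

```python
from unittest.mock import Mock


class Logic:
    """Hypothetical Python counterpart of the Java Logic class."""

    def __init__(self, storage):
        self.storage = storage

    def get_name(self, index):
        return "Name: " + self.storage.get_name(index)


# Configure the mock to give a canned response, as a stub would.
storage_mock = Mock()
storage_mock.get_name.return_value = "Adam"

logic = Logic(storage_mock)
result = logic.get_name(5)

# State check: same kind of assertion as in the stub-based test.
assert result == "Name: Adam"

# Behavior check: the mock remembers calls, so the interaction can be verified.
storage_mock.get_name.assert_called_once_with(5)
```

The last line is what makes this a mock-style test: it would fail if Logic never called the storage, or called it with a different index.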

Resources:

- Mocks Aren't Stubs by Martin Fowler -- An in-depth article about how Stubs differ from other types of test helpers.

Guidance for the item(s) below:

Dependency Injection is a technique closely related to stubs. It is not in the syllabus but is given below in case some of you would like to know more about it.

Integration Testing

Can explain integration testing

Integration testing: testing whether different parts of the software work together (i.e. integrate) as expected. Integration tests aim to discover bugs in the 'glue code' related to how components interact with each other. These bugs are often the result of misunderstanding what the parts are supposed to do vs what the parts are actually doing.

Suppose a class Car uses classes Engine and Wheel. If the Car class assumed a Wheel can support a speed of up to 200 mph but the actual Wheel can only support a speed of up to 150 mph, it is the integration test that is supposed to uncover this discrepancy.
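The Car/Wheel discrepancy can be sketched as follows. Everything here is hypothetical: the source gives only the two speed limits, so the class shapes, method names, and the use of ValueError are assumptions made for illustration. Each class could pass its own unit tests, yet the integration test exposes the mismatch.

```python
class Wheel:
    MAX_SPEED_MPH = 150  # what the actual Wheel supports

    def spin(self, speed_mph):
        if speed_mph > self.MAX_SPEED_MPH:
            raise ValueError("wheel cannot support " + str(speed_mph) + " mph")
        return speed_mph


class Engine:
    def power(self, speed_mph):
        return speed_mph  # placeholder behavior


class Car:
    TOP_SPEED_MPH = 200  # Car's (wrong) assumption about what Wheel supports

    def __init__(self, engine, wheel):
        self.engine = engine
        self.wheel = wheel

    def drive(self, speed_mph):
        if speed_mph > self.TOP_SPEED_MPH:
            raise ValueError("beyond the car's top speed")
        self.engine.power(speed_mph)
        return self.wheel.spin(speed_mph)


def integration_test_top_speed():
    """Return True if Car, with the real Engine and Wheel, reaches its top speed."""
    car = Car(Engine(), Wheel())
    try:
        return car.drive(Car.TOP_SPEED_MPH) == Car.TOP_SPEED_MPH
    except ValueError:
        return False
```

In this sketch, integration_test_top_speed() returns False, which is the integration test reporting the discrepancy. A unit test of Car that used a Wheel stub accepting 200 mph would not have noticed it.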

Can use integration testing

Integration testing is not simply a case of repeating the unit test cases using the actual dependencies (instead of the stubs used in unit testing). Instead, integration tests are additional test cases that focus on the interactions between the parts.

Suppose a class Car uses classes Engine and Wheel. Here is how you would go about doing pure integration tests:

a) First, unit test Engine and Wheel.

b) Next, unit test Car in isolation of Engine and Wheel, using stubs for Engine and Wheel.

c) After that, do an integration test for Car by using it together with the Engine and Wheel classes to ensure that Car integrates properly with the Engine and the Wheel.

In practice, developers often use a hybrid of unit+integration tests to minimize the need for stubs.

Here's how a hybrid unit+integration approach could be applied to the same example used above:

(a) First, unit test Engine and Wheel.

(b) Skip the step of unit testing Car in isolation of Engine and Wheel; no stubs for Engine and Wheel are written.

(c) After that, do an integration test for Car by using it together with the Engine and Wheel classes to ensure that Car integrates properly with the Engine and the Wheel. This step should include test cases that are meant to unit test Car (i.e. test cases used in step (b) of the example above) as well as test cases that are meant to test the integration of Car with Wheel and Engine (i.e. pure integration test cases used in step (c) of the example above).

Note that you no longer need stubs for Engine and Wheel. The downside is that Car is never tested in isolation of its dependencies. Given that its dependencies are already unit tested, the risk of bugs in Engine and Wheel affecting the testing of Car can be considered minimal.

System Testing

Can explain system testing

System testing: take the whole system and test it against the system specification.

System testing is typically done by a testing team (also called a QA team).

System test cases are based on the specified external behavior of the system. Sometimes, system tests go beyond the bounds defined in the specification. This is useful when testing that the system fails 'gracefully' when pushed beyond its limits.

Suppose the SUT is a browser that is supposedly capable of handling web pages containing up to 5000 characters. Given below is a test case to test if the SUT fails gracefully if pushed beyond its limits.

Test case: load a web page that is too big

* Input: loads a web page containing more than 5000 characters.

* Expected behavior: aborts the loading of the page and shows a meaningful error message.

This test case would fail if the browser attempted to load the large file anyway and crashed.

System testing includes testing against non-functional requirements too. Here are some examples:

- Performance testing – to ensure the system responds quickly.

- Load testing (also called stress testing or scalability testing) – to ensure the system can work under heavy load.

- Security testing – to test how secure the system is.

- Compatibility testing, interoperability testing – to check whether the system can work with other systems.

- Usability testing – to test how easy it is to use the system.

- Portability testing – to test whether the system works on different platforms.

Can explain automated GUI testing

If a software product has a GUI (Graphical User Interface) component, all product-level testing (i.e. the types of testing mentioned above) needs to be done using the GUI. However, testing the GUI is much harder than testing the CLI (Command Line Interface) or API, for the following reasons:

- Most GUIs can support a large number of different operations, many of which can be performed in any arbitrary order.

- GUI operations are more difficult to automate than API testing. Reliably automating GUI operations and automatically verifying whether the GUI behaves as expected is harder than calling an operation and comparing its return value with an expected value. Therefore, automated regression testing of GUIs is rather difficult.

- The appearance of a GUI (and sometimes even behavior) can be different across platforms and even environments. For example, a GUI can behave differently based on whether it is minimized or maximized, in focus or out of focus, and in a high resolution display or a low resolution display.

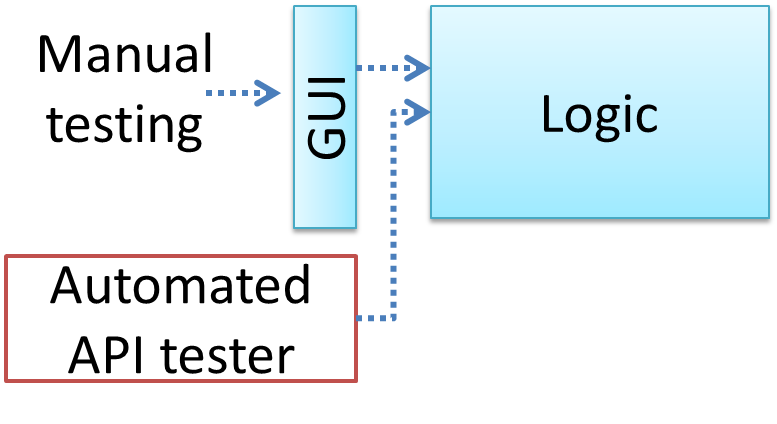

Moving as much logic as possible out of the GUI can make GUI testing easier. That way, you can bypass the GUI to test the rest of the system using automated API testing. While this still requires the GUI to be tested, the number of such test cases can be reduced as most of the system will have been tested using automated API testing.

There are testing tools that can automate GUI testing.

Some tools used for automated GUI testing:

- TestFX can do automated testing of JavaFX GUIs.

- Visual Studio supports the ‘record replay’ type of GUI test automation.

- Selenium can be used to automate testing of web application UIs.

Demo video of automated testing of a web application

Acceptance Testing

Can explain acceptance testing

Acceptance testing (aka User Acceptance Testing (UAT)): test the system to ensure it meets the user requirements.

Acceptance tests give an assurance to the customer that the system does what it is intended to do. Acceptance test cases are often defined at the beginning of the project, usually based on the use case specification. Successful completion of UAT is often a prerequisite to the project sign-off.

Can explain the differences between system testing and acceptance testing

Acceptance testing comes after system testing. Similar to system testing, acceptance testing involves testing the whole system.

Some differences between system testing and acceptance testing:

| System Testing | Acceptance Testing |
|---|---|
| Done against the system specification | Done against the requirements specification |
| Done by testers of the project team | Done by a team that represents the customer |
| Done on the development environment or a test bed | Done on the deployment site or on a close simulation of the deployment site |
| Both negative and positive test cases | More focus on positive test cases |

Note: negative test cases: cases where the SUT is not expected to work normally e.g. incorrect inputs; positive test cases: cases where the SUT is expected to work normally

Requirement specification versus system specification

The requirement specification need not be the same as the system specification. Some example differences:

| Requirements specification | System specification |
|---|---|
| limited to how the system behaves in normal working conditions | can also include details on how it will fail gracefully when pushed beyond limits, how to recover, etc. |
| written in terms of problems that need to be solved (e.g. provide a method to locate an email quickly) | written in terms of how the system solves those problems (e.g. explain the email search feature) |
| specifies the interface available for intended end-users | could contain additional APIs not available for end-users (for the use of developers/testers) |

However, in many cases one document serves as both a requirement specification and a system specification.

Passing system tests does not necessarily mean passing acceptance testing. Some examples:

- The system might work on the testbed environments but might not work the same way in the deployment environment, due to subtle differences between the two environments.

- The system might conform to the system specification but could fail to solve the problem it was supposed to solve for the user, due to flaws in the system design.

Alpha/Beta Testing

Can explain alpha and beta testing

Alpha testing is performed by the users, under controlled conditions set by the software development team.

Beta testing is performed by a selected subset of target users of the system in their natural work setting.

An open beta release is the release of not-yet-production-quality-but-almost-there software to the general population. For example, Google’s Gmail was in 'beta' for many years before the label was finally removed.

Guidance for the item(s) below:

Previously, we learned how to measure test coverage. This week, we look into how to increase coverage with the least number of test cases.

First, we take a look at test case design in general, different approaches to test case design, and a few different categorizations of test cases.

Guidance for the item(s) below:

What is test case design, and why should we care?

Can explain the need for deliberate test case design

Except for trivial SUTs, exhaustive testing is not practical because such testing often requires a massive/infinite number of test cases.

Consider the test cases for adding a string object to a collection:

- Add an item to an empty collection.

- Add an item when there is one item in the collection.

- Add an item when there are 2, 3, ..., n items in the collection.

- Add an item that has an English, a French, a Spanish, ... word.

- Add an item that is the same as an existing item.

- Add an item immediately after adding another item.

- Add an item immediately after system startup.

- ...

Exhaustive testing of this operation can take many more test cases.

Program testing can be used to show the presence of bugs, but never to show their absence! --Edsger Dijkstra

Every test case adds to the cost of testing. In some systems, a single test case can cost thousands of dollars e.g. on-field testing of flight-control software. Therefore, test cases need to be designed to make the best use of testing resources. In particular:

Testing should be effective i.e., it finds a high percentage of existing bugs e.g., a set of test cases that finds 60 defects is more effective than a set that finds only 30 defects in the same system.

Testing should be efficient i.e., it has a high rate of success (bugs found/test cases) e.g., a set of 20 test cases that finds 8 defects is more efficient than another set of 40 test cases that finds the same 8 defects.

For testing to be E&E (effective and efficient), each new test you add should be targeting a potential fault that is not already targeted by existing test cases. There are test case design techniques that can help us improve the E&E of testing.
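The arithmetic behind the two examples above can be worked through in a few lines. This is only a sketch: the function names are made up here, and the figure of 100 existing bugs is an assumption added for illustration (the text gives only the number of defects found).

```python
def effectiveness(bugs_found, bugs_existing):
    """Fraction of the existing bugs that the test cases found."""
    return bugs_found / bugs_existing


def efficiency(bugs_found, test_cases_run):
    """Bugs found per test case run."""
    return bugs_found / test_cases_run


# Effectiveness: same system, assumed to contain 100 bugs in total.
print(effectiveness(60, 100))  # 0.6
print(effectiveness(30, 100))  # 0.3

# Efficiency: both sets find the same 8 defects.
print(efficiency(8, 20))  # 0.4
print(efficiency(8, 40))  # 0.2
```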

Can explain exploratory testing and scripted testing

Here are two alternative approaches to testing software: scripted testing and exploratory testing.

Scripted testing: First write a set of test cases based on the expected behavior of the SUT, and then perform testing based on that set of test cases.

Exploratory testing: Devise test cases on-the-fly, creating new test cases based on the results of the past test cases.

Exploratory testing is ‘the simultaneous learning, test design, and test execution’ [source: bach-et-explained] whereby the nature of the follow-up test case is decided based on the behavior of the previous test cases. In other words, running the system and trying out various operations. It is called exploratory testing because testing is driven by observations during testing. Exploratory testing usually starts with areas identified as error-prone, based on the tester’s past experience with similar systems. One tends to conduct more tests for those operations where more faults are found.

Here is an example thought process behind a segment of an exploratory testing session:

“Hmm... looks like feature x is broken. This usually means feature n and k could be broken too; you need to look at them soon. But before that, you should give a good test run to feature y because users can still use the product if feature y works, even if x doesn’t work. Now, if feature y doesn’t work 100%, you have a major problem and this has to be made known to the development team sooner rather than later...”

Exploratory testing is also known as reactive testing, error guessing technique, attack-based testing, and bug hunting.

Can explain the choice between exploratory testing and scripted testing

Which approach is better – scripted or exploratory? A mix is better.

The success of exploratory testing depends on the tester’s prior experience and intuition. Exploratory testing should be done by experienced testers, using a clear strategy/plan/framework. Ad-hoc exploratory testing by unskilled or inexperienced testers without a clear strategy is not recommended for real-world non-trivial systems. While exploratory testing may allow us to detect some problems in a relatively short time, it is not prudent to use exploratory testing as the sole means of testing a critical system.

Scripted testing is more systematic, and hence, likely to discover more bugs given sufficient time, while exploratory testing would aid in quick error discovery, especially if the tester has a lot of experience in testing similar systems.

In some contexts, you will achieve your testing mission better through a more scripted approach; in other contexts, your mission will benefit more from the ability to create and improve tests as you execute them. I find that most situations benefit from a mix of scripted and exploratory approaches. --[source: bach-et-explained]

Can explain positive and negative test cases

A positive test case is when the test is designed to produce an expected/valid behavior. On the other hand, a negative test case is designed to produce a behavior that indicates an invalid/unexpected situation, such as an error message.

Consider the testing of the method print(Integer i) which prints the value of i.

- A positive test case: i == new Integer(50)

- A negative test case: i == null
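Here is how the two test cases for print(Integer i) might look in Python. The function print_value is a hypothetical stand-in, and its behavior for a missing value (raising ValueError) is an assumption, because the text does not say how print should react to null.

```python
def print_value(i):
    """Hypothetical stand-in for print(Integer i); returns the text it would print."""
    if i is None:
        # Assumed behavior: reject a missing value with an error.
        raise ValueError("i must not be null")
    return str(i)


def positive_test_passes():
    # Valid input, normal behavior expected.
    return print_value(50) == "50"


def negative_test_passes():
    # Invalid input, an error is the expected outcome.
    try:
        print_value(None)
    except ValueError:
        return True
    return False
```

Note that the negative test passes when the error occurs; it is the situation being tested that is 'negative', not the test result.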

Guidance for the item(s) below:

How much information about the code is being used when designing test cases?

Can explain black box and glass box test case design

Test case design can be of three types, based on how much of the SUT's internal details are considered when designing test cases:

Black-box (aka specification-based or responsibility-based) approach: test cases are designed exclusively based on the SUT’s specified external behavior.

White-box (aka glass-box or structured or implementation-based) approach: test cases are designed based on what is known about the SUT’s implementation, i.e. the code.

Gray-box approach: test case design uses some important information about the implementation. For example, if the implementation of a sort operation uses different algorithms to sort lists shorter than 1000 items and lists longer than 1000 items, more meaningful test cases can then be added to verify the correctness of both algorithms.
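The sort example could play out as in the sketch below. The two algorithms, the THRESHOLD constant, and the decision to send a list of exactly 1000 items to the second algorithm are all assumptions made for illustration. Knowing only that a switch-over exists near 1000 items, a gray-box tester would add list sizes on both sides of it.

```python
import random

THRESHOLD = 1000  # assumed switch-over point between the two algorithms


def insertion_sort(items):
    result = []
    for item in items:
        pos = len(result)
        # Walk left past every element larger than the new item.
        while pos > 0 and result[pos - 1] > item:
            pos -= 1
        result.insert(pos, item)
    return result


def merge_sort(items):
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left, right = merge_sort(items[:mid]), merge_sort(items[mid:])
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    return merged + left[i:] + right[j:]


def hybrid_sort(items):
    """Uses one algorithm for short lists and another for long lists."""
    if len(items) < THRESHOLD:
        return insertion_sort(items)
    return merge_sort(items)


def gray_box_sizes():
    """List sizes chosen using knowledge of the switch-over point."""
    return [0, 1, THRESHOLD - 1, THRESHOLD, THRESHOLD + 1]


def run_gray_box_tests():
    rng = random.Random(0)  # fixed seed keeps the test repeatable
    for size in gray_box_sizes():
        data = [rng.randint(-1000, 1000) for _ in range(size)]
        assert hybrid_sort(data) == sorted(data)
    return True
```

A purely black-box tester would have no particular reason to pick sizes such as 999 and 1001, so one of the two algorithms might never be exercised.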

Black-box and white-box testing

Can explain test case design for use case based testing

Use cases can be used for system testing and acceptance testing. For example, the main success scenario can be one test case while each variation (due to extensions) can form another test case. However, note that use cases do not specify the exact data entered into the system. Instead, a use case might say something like 'user enters his personal data into the system'. Therefore, the tester has to choose data by considering equivalence partitions and boundary values. The combinations of these could result in one use case producing many test cases.

To increase the E&E of testing, high-priority use cases are given more attention. For example, a scripted approach can be used to test high-priority test cases, while an exploratory approach is used to test other areas of concern that could emerge during testing.

Guidance for the item(s) below:

Next, a heuristic used for improving the quality of test cases.

Can explain equivalence partitions

Consider the testing of the following operation.

isValidMonth(m) : returns true if m (an int) is in the range [1..12]

It is inefficient and impractical to test this method for all integer values [MIN_INT to MAX_INT]. Fortunately, there is no need to test all possible input values. For example, if the input value 233 fails to produce the correct result, the input 234 is likely to fail too; there is no need to test both.

In general, most SUTs do not treat each input in a unique way. Instead, they process all possible inputs in a small number of distinct ways. That means a range of inputs is treated the same way inside the SUT. Equivalence partitioning (EP) is a test case design technique that uses the above observation to improve the E&E of testing.

Equivalence partition (aka equivalence class): A group of test inputs that are likely to be processed by the SUT in the same way.

By dividing possible inputs into equivalence partitions, you can:

- avoid testing too many inputs from one partition. Testing too many inputs from the same partition is unlikely to find new bugs. This increases the efficiency of testing by reducing redundant test cases.

- ensure all partitions are tested. Missing partitions can result in bugs going unnoticed. This increases the effectiveness of testing by increasing the chance of finding bugs.

Can apply EP for pure functions

Equivalence partitions (EPs) are usually derived from the specifications of the SUT.

These could be EPs for the isValidMonth example:

- [MIN_INT ... 0]: below the range that produces true (produces false)

- [1 … 12]: the range that produces true

- [13 … MAX_INT]: above the range that produces true (produces false)
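As a sketch of how these partitions translate into test cases, the code below picks one representative value from each of the three partitions. The Python function is_valid_month is an assumed implementation written only for this illustration, and the specific values -7, 6 and 25 are arbitrary picks from their partitions.

```python
def is_valid_month(m):
    """Assumed implementation of isValidMonth(m)."""
    return 1 <= m <= 12


# One (input, expected result) pair per equivalence partition.
EP_TEST_CASES = [
    (-7, False),  # from [MIN_INT ... 0]
    (6, True),    # from [1 ... 12]
    (25, False),  # from [13 ... MAX_INT]
]


def run_ep_tests():
    """Return one pass/fail flag per test case."""
    return [is_valid_month(value) == expected
            for value, expected in EP_TEST_CASES]
```

Three test cases cover all three partitions; adding 7, 8 and 9 as further inputs would add cost without targeting any new partition.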

When the SUT has multiple inputs, you should identify EPs for each input.

Consider the method duplicate(String s, int n): String which returns a String that contains s repeated n times.

Example EPs for s:

- zero-length strings

- string containing whitespaces

- ...

Example EPs for n:

- 0

- negative values

- ...
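A sketch of test cases drawn from the EPs of both inputs is given below. The Python duplicate function is an assumed implementation, and rejecting a negative n with ValueError is a guess, since the specification in the text does not say what should happen in that case.

```python
def duplicate(s, n):
    """Assumed implementation: returns s repeated n times."""
    if n < 0:
        # Assumed behavior for the 'negative values' partition.
        raise ValueError("n must not be negative")
    return s * n


def outcome(s, n):
    """Run duplicate and report either its result or 'rejected'."""
    try:
        return duplicate(s, n)
    except ValueError:
        return "rejected"


# Each case targets at least one partition of s or n.
print(outcome("", 3))     # s: zero-length string
print(outcome("a b", 2))  # s: string containing whitespace
print(outcome("ab", 0))   # n: 0
print(outcome("ab", -1))  # n: negative value
```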

An EP may not have adjacent values.

Consider the method isPrime(int i): boolean that returns true if i is a prime number.

EPs for i:

- prime numbers

- non-prime numbers
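The sketch below shows why these two partitions are not ranges: the sample values from each partition interleave on the number line. The is_prime implementation (simple trial division) and the sample values are assumptions chosen for illustration.

```python
def is_prime(i):
    """Assumed implementation using trial division."""
    if i < 2:
        return False
    divisor = 2
    while divisor * divisor <= i:
        if i % divisor == 0:
            return False
        divisor += 1
    return True


# Sample values from each partition; note that they are not contiguous.
PRIMES = [2, 3, 13, 97]
NON_PRIMES = [-5, 0, 1, 4, 15, 100]
```

A tester following the EP heuristic would pick only a value or two from each list rather than all of them.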

Some inputs have only a small number of possible values and a potentially unique behavior for each value. In those cases, you have to consider each value as a partition by itself.

Consider the method showStatusMessage(GameStatus s): String that returns a unique String for each of the possible values of s (GameStatus is an enum). In this case, each possible value of s will have to be considered as a partition.
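One way to cover 'each value is its own partition' is to loop over every enum value, as in the sketch below. The members of GameStatus are borrowed from the Minesweeper game states used elsewhere in this section, and the message texts are invented for illustration.

```python
from enum import Enum


class GameStatus(Enum):
    PRE_GAME = 1
    READY = 2
    IN_PLAY = 3
    WON = 4
    LOST = 5


# Hypothetical messages; the real texts are not given in the source.
_MESSAGES = {
    GameStatus.PRE_GAME: "Press start to begin",
    GameStatus.READY: "Make your first move",
    GameStatus.IN_PLAY: "Game in progress",
    GameStatus.WON: "You won!",
    GameStatus.LOST: "You lost",
}


def show_status_message(s):
    return _MESSAGES[s]


# One test input per enum value, i.e. one per partition.
messages = [show_status_message(s) for s in GameStatus]
```

Checking that the collected messages are all different is a simple way to confirm the 'unique String for each value' part of the specification.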

Note that the EP technique is merely a heuristic and not an exact science, especially when applied manually (as opposed to using an automated program analysis tool to derive EPs). The partitions derived depend on how one ‘speculates’ the SUT to behave internally. Applying EP under a glass-box or gray-box approach can yield more precise partitions.

Consider the EPs given above for the method isValidMonth. A different tester might use these EPs instead:

- [1 … 12]: the range that produces true

- [all other integers]: the range that produces false


Can apply EP for OOP methods

When deciding EPs of OOP methods, you need to identify the EPs of all data participants that can potentially influence the behaviour of the method, such as,

- the target object of the method call

- input parameters of the method call

- other data/objects accessed by the method such as global variables. This category may not be applicable if using the black box approach (because the test case designer using the black box approach will not know how the method is implemented).

Consider this method in the DataStack class:

push(Object o): boolean

- Adds o to the top of the stack if the stack is not full.

- Returns true if the push operation was a success.

- Throws

  - MutabilityException if the global flag FREEZE==true.

  - InvalidValueException if o is null.

EPs:

- DataStack object: [full] [not full]

- o: [null] [not null]

- FREEZE: [true] [false]
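The sketch below exercises each of these partitions at least once. The Python DataStack is an assumed implementation: the order in which the conditions are checked, the False return value for a full stack, and the helper push_outcome are all guesses made for illustration, as the specification above leaves them open.

```python
class MutabilityException(Exception):
    pass


class InvalidValueException(Exception):
    pass


FREEZE = False  # the global flag mentioned in the specification


class DataStack:
    def __init__(self, capacity):
        self._capacity = capacity
        self._items = []

    def push(self, o):
        if FREEZE:
            raise MutabilityException()
        if o is None:
            raise InvalidValueException()
        if len(self._items) >= self._capacity:
            return False  # assumed result when the stack is full
        self._items.append(o)
        return True


def push_outcome(stack_full, o, freeze):
    """Set up one combination of participant states and report what push does."""
    global FREEZE
    FREEZE = freeze
    stack = DataStack(capacity=1)
    if stack_full:
        stack._items.append("filler")  # fill the stack directly for test setup
    try:
        return stack.push(o)
    except (MutabilityException, InvalidValueException) as e:
        return type(e).__name__
    finally:
        FREEZE = False  # restore the global flag for later tests


print(push_outcome(False, "x", False))  # not full, not null, FREEZE false
print(push_outcome(True, "x", False))   # full
print(push_outcome(False, None, False)) # o is null
print(push_outcome(False, "x", True))   # FREEZE is true
```

Note how the global flag is treated as a participant just like the target object and the parameter, and is reset after every test so that one test case cannot affect the next.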

Consider a simple Minesweeper app. What are the EPs for the newGame() method of the Logic component?

As newGame() does not have any parameters, the only obvious participant is the Logic object itself.

Note that if the glass-box or the grey-box approach is used, other associated objects that are involved in the method might also be included as participants. For example, the Minefield object can be considered as another participant of the newGame() method. Here, the black-box approach is assumed.

Next, let us identify equivalence partitions for each participant. Will the newGame() method behave differently for different Logic objects? If yes, how will it differ? In this case, yes, it might behave differently based on the game state. Therefore, the equivalence partitions are:

- PRE_GAME: before the game starts, minefield does not exist yet

- READY: a new minefield has been created and the app is waiting for the player’s first move

- IN_PLAY: the current minefield is already in use

- WON, LOST: let us assume that newGame() behaves the same way for these two values

Consider the Logic component of the Minesweeper application. What are the EPs for the markCellAt(int x, int y) method? The partitions in bold represent valid inputs.

- Logic: PRE_GAME, READY, IN_PLAY, WON, LOST

- x: [MIN_INT..-1] [0..(W-1)] [W..MAX_INT] (assuming a minefield size of WxH)

- y: [MIN_INT..-1] [0..(H-1)] [H..MAX_INT]

- Cell at (x,y): HIDDEN, MARKED, CLEARED